Bareos ®

Backup Archiving REcovery Open Sourced

Main Reference

Bareos GmbH & Co KG

This manual documents Bareos version master (January 10, 2019)

Copyright © 1999-2012, Free Software Foundation Europe e.V.

Copyright © 2010-2012, Planets Communications B.V.

Copyright © 2013-2018, Bareos GmbH & Co. KG

Bareos ® is a registered trademark of Bareos GmbH & Co KG.

Bacula ® is a registered trademark of Kern Sibbald.

Permission is granted to copy, distribute and/or modify this document under the terms of

the GNU Free Documentation License, Version 1.2 published by the Free Software

Foundation; with no Invariant Sections, no Front-Cover Texts, and no Back-Cover Texts. A

copy of the license is included in the section entitled ”GNU Free Documentation License”.

#

Bareos is a set of computer programs that permits the system administrator to manage backup, recovery,

and verification of computer data across a network of computers of different kinds. Bareos can also

run entirely upon a single computer and can backup to various types of media, including tape and

disk.

In technical terms, it is a network Client/Server based backup program. Bareos is relatively easy to use and efficient,

while offering many advanced storage management features that make it easy to find and recover lost or damaged

files. Due to its modular design, Bareos is scalable from small single computer systems to systems consisting of

hundreds of computers located over a large network.

#

Bareos is a fork of the open source project Bacula version 5.2. In 2010 the Bacula community developer Marco

van Wieringen started to collect rejected or neglected community contributions in his own branch. This branch was

later on the base of Bareos and since then was enriched by a lot of new features.

This documentation also bases on the original Bacula documentation, it is technically also a fork of the

documenation created following the rules of the GNU Free Documentation License.

Original author of Bacula and its documentation is Kern Sibbald. We thank Kern and all contributors to Bacula

and it’s documentation. We maintain a list of contributors to Bacula (until the time we’ve started the fork) and

Bareos in our AUTHORS file.

#

If you are currently using a program such as tar, dump, or bru to backup your computer data, and you would like a

network solution, more flexibility, or catalog services, Bareos will most likely provide the additional features you

want. However, if you are new to Unix systems or do not have offsetting experience with a sophisticated backup

package, the Bareos project does not recommend using Bareos as it is much more difficult to setup and use than tar

or dump.

If you want Bareos to behave like the above mentioned simple programs and write over any tape that you put in the

drive, then you will find working with Bareos difficult. Bareos is designed to protect your data following the rules

you specify, and this means reusing a tape only as the last resort. It is possible to ”force” Bareos to write over

any tape in the drive, but it is easier and more efficient to use a simpler program for that kind of

operation.

If you would like a backup program that can write to multiple volumes (i.e. is not limited by your tape drive

capacity), Bareos can most likely fill your needs.

If you are currently using a sophisticated commercial package such as Legato Networker, ARCserveIT,

Arkeia, IBM Tivoli Storage Manager or PerfectBackup+, you may be interested in Bareos, which

provides many of the same features and is free software available under the GNU AGPLv3 software

license.

#

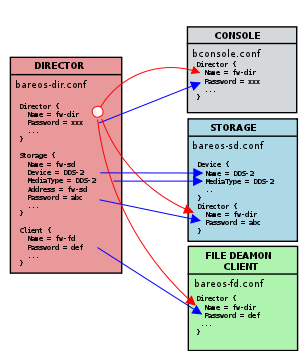

Bareos is made up of the following major components or services: Director, Console, File, Storage, and Monitor

services.

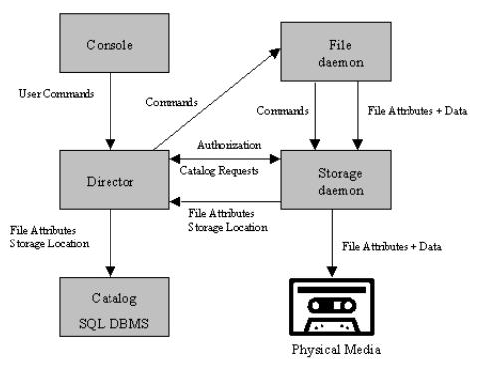

The Director is the central control program for all the other daemons. It schedules and supervises all the

backup, restore, verify and archive operations. The system administrator uses the Bareos Director to

schedule backups and to recover files. The Director runs as a daemon (or service) in the background.

The Bareos Console (bconsole) is the program that allows the administrator or user to communicate with the

Bareos Director. It runs in a shell window (i.e. TTY interface). Most system administrators will find this completely

adequate. For more details see the Bareos Console.

The Bareos File Daemon is a program that must be installed on each (Client) machine that should be backed up.

At the request of the Bareos Director, it finds the files to be backed up and sends them (their data) to the Bareos

Storage Daemon.

It is specific to the operating system on which it runs and is responsible for providing the file attributes and data

when requested by the Bareos Director.

The Bareos File Daemon is also responsible for the file system dependent part of restoring the file attributes

and data during a recovery operation. This program runs as a daemon on the machine to be backed

up.

The Bareos Storage Daemon is responsible, at the Bareos Director request, for accepting data from a Bareos File

Daemon and storing the file attributes and data to the physical backup media or volumes. In the case of a restore

request, it is responsible to find the data and send it to the Bareos File Daemon.

There can be multiple Bareos Storage Daemon in your environment, all controlled by the same Bareos

Director.

The Storage services runs as a daemon on the machine that has the backup device (such as a tape

drive).

The Catalog services are comprised of the software programs responsible for maintaining the file indexes and

volume databases for all files backed up. The Catalog services permit the system administrator or user to quickly

locate and restore any desired file. The Catalog services sets Bareos apart from simple backup programs

like tar and bru, because the catalog maintains a record of all Volumes used, all Jobs run, and all

Files saved, permitting efficient restoration and Volume management. Bareos currently supports three

different databases, MySQL, PostgreSQL, and SQLite, one of which must be chosen when building

Bareos.

The three SQL databases currently supported (MySQL, PostgreSQL or SQLite) provide quite a number of features,

including rapid indexing, arbitrary queries, and security. Although the Bareos project plans to support other

major SQL databases, the current Bareos implementation interfaces only to MySQL, PostgreSQL and

SQLite.

To perform a successful save or restore, the following four daemons must be configured and running: the

Director daemon, the File daemon, the Storage daemon, and the Catalog service (MySQL, PostgreSQL or

SQLite).

#

Bareos version numbers consists of three parts: YY.Q.C

YY | year (last two digits) |

Q | quarter of the year |

YY.Q | year and quarter of the code freeze. After this, as a general rule, no new feature should

get introduced to this Bareos branch. Subsequent release are for bugfixing. |

C | Release counter. For every subsequent release, this counter is incremented. Beginning

with 16.2, numbers from 1 to 3 represents the month of the quarter during development.

After the code freeze, the number is set to 4. So, stable releases get number from 4

onwards. Maintenance releases get numbers starting from 5 onwards. |

| |

Following information can be determined from the Bareos release bareos-16.2.4:

- 16.2: Code freeze have been in the second quarter of 2016

- 4: this is the first stable release of the bareos-16.2 branch

For details about the different releases see Release Notes.

#

Following Bareos Linux packages are available (release 17.2.4):

|

|

| Package Name | Description |

|

|

| bareos | Backup Archiving REcovery Open Sourced - metapackage |

| bareos-bconsole | Bareos administration console (CLI) |

| bareos-client | Bareos client Meta-All-In-One package |

| bareos-common | Common files, required by multiple Bareos packages |

| bareos-database-common | Generic abstraction libs and files to connect to a database |

| bareos-database-mysql | Libs and tools for mysql catalog |

| bareos-database-postgresql | Libs and tools for postgresql catalog |

| bareos-database-sqlite3 | Libs and tools for sqlite3 catalog |

| bareos-database-tools | Bareos CLI tools with database dependencies (bareos-dbcheck, bscan) |

| bareos-devel | Devel headers |

| bareos-director | Bareos Director daemon |

| bareos-director-python-plugin | Python plugin for Bareos Director daemon |

| bareos-filedaemon | Bareos File daemon (backup and restore client) |

| bareos-filedaemon-ceph-plugin | CEPH plugin for Bareos File daemon |

| bareos-filedaemon-glusterfs-plugin | GlusterFS plugin for Bareos File daemon |

| bareos-filedaemon-ldap-python-plugin | LDAP Python plugin for Bareos File daemon |

| bareos-filedaemon-python-plugin | Python plugin for Bareos File daemon |

| bareos-regress-config | Required files for bareos-regress |

| bareos-storage | Bareos Storage daemon |

| bareos-storage-ceph | CEPH support for the Bareos Storage daemon |

| bareos-storage-droplet | Object Storage support (through libdroplet) for the Bareos Storage daemon |

| bareos-storage-fifo | FIFO support for the Bareos Storage backend |

| bareos-storage-glusterfs | GlusterFS support for the Bareos Storage daemon |

| bareos-storage-python-plugin | Python plugin for Bareos Storage daemon |

| bareos-storage-tape | Tape support for the Bareos Storage daemon |

| bareos-tools | Bareos CLI tools (bcopy, bextract, bls, bregex, bwild) |

| bareos-traymonitor | Bareos Tray Monitor (QT) |

| bareos-vadp-dumper | VADP Dumper - vStorage APIs for Data Protection Dumper program |

| bareos-vmware-plugin | Bareos VMware plugin |

| bareos-vmware-plugin-compat | Bareos VMware plugin compatibility |

| bareos-vmware-vix-disklib | VMware vix disklib distributable libraries |

| bareos-webui | Bareos Web User Interface |

| python-bareos | Backup Archiving REcovery Open Sourced - Python module |

|

|

| |

Not all packages (especially optional backends and plugins) are available on all platforms. For details, see Packages

for the different Linux platforms.

Additionally, packages containing debug information are available. These are named differently depending on the

distribution (bareos-debuginfo or bareos-dbg or …).

Not all packages are required to run Bareos.

- For the Bareos Director, the package bareos-director and one of bareos-database-postgresql,

bareos-database-mysql or bareos-database-sqlite3 are required. It is recommended to use

bareos-database-postgresql.

- For the Bareos Storage Daemon, the package bareos-storage is required. If you plan to connect tape

drives to the storage director, also install the package bareos-storage-tape. This is kept separately,

because it has additional dependencies for tape tools.

- On a client, only the package bareos-filedaemon is required. If you run it on a workstation, the

packages bareos-traymonitor gives the user information about running backups.

- On a Backup Administration system you need to install at least bareos-bconsole to have an interactive

console to the Bareos Director.

#

To get Bareos up and running quickly, the author recommends that you first scan the Terminology section below,

then quickly review the next chapter entitled The Current State of Bareos, then the Installing Bareos, the

Getting Started with Bareos, which will give you a quick overview of getting Bareos running. After

which, you should proceed to the chapter How to Configure Bareos, and finally the chapter on Running

Bareos.

#

-

Administrator

- The person or persons responsible for administrating the Bareos system.

-

Backup

- The term Backup refers to a Bareos Job that saves files.

-

Bootstrap File

- The bootstrap file is an ASCII file containing a compact form of commands that allow

Bareos or the stand-alone file extraction utility (bextract) to restore the contents of one or more

Volumes, for example, the current state of a system just backed up. With a bootstrap file, Bareos can

restore your system without a Catalog. You can create a bootstrap file from a Catalog to extract any

file or files you wish.

-

Catalog

- The Catalog is used to store summary information about the Jobs, Clients, and Files that

were backed up and on what Volume or Volumes. The information saved in the Catalog permits

the administrator or user to determine what jobs were run, their status as well as the important

characteristics of each file that was backed up, and most importantly, it permits you to choose what

files to restore. The Catalog is an online resource, but does not contain the data for the files backed

up. Most of the information stored in the catalog is also stored on the backup volumes (i.e. tapes). Of

course, the tapes will also have a copy of the file data in addition to the File Attributes (see below).

The catalog feature is one part of Bareos that distinguishes it from simple backup and archive programs

such as dump and tar.

-

Client

- In Bareos’s terminology, the word Client refers to the machine being backed up, and it is synonymous

with the File services or File daemon, and quite often, it is referred to it as the FD. A Client is defined

in a configuration file resource.

-

Console

- The program that interfaces to the Director allowing the user or system administrator to control

Bareos.

-

Daemon

- Unix terminology for a program that is always present in the background to carry out a designated

task. On Windows systems, as well as some Unix systems, daemons are called Services.

-

Directive

- The term directive is used to refer to a statement or a record within a Resource in a configuration

file that defines one specific setting. For example, the Name directive defines the name of the Resource.

-

Director

- The main Bareos server daemon that schedules and directs all Bareos operations. Occasionally,

the project refers to the Director as DIR.

-

Differential

- A backup that includes all files changed since the last Full save started. Note, other backup

programs may define this differently.

-

File Attributes

- The File Attributes are all the information necessary about a file to identify it and all its

properties such as size, creation date, modification date, permissions, etc. Normally, the attributes are

handled entirely by Bareos so that the user never needs to be concerned about them. The attributes

do not include the file’s data.

-

File daemon

- The daemon running on the client computer to be backed up. This is also referred to as the

File services, and sometimes as the Client services or the FD.

-

FileSet

- A FileSet is a Resource contained in a configuration file that defines the files to be backed up.

It consists of a list of included files or directories, a list of excluded files, and how the file is to be

stored (compression, encryption, signatures). For more details, see the FileSet Resource in the Director

chapter of this document.

-

Incremental

- A backup that includes all files changed since the last Full, Differential, or Incremental backup

started. It is normally specified on the Level directive within the Job resource definition, or in a

Schedule resource.

-

Job

- A Bareos Job is a configuration resource that defines the work that Bareos must perform to backup

or restore a particular Client. It consists of the Type (backup, restore, verify, etc), the Level (full,

differential, incremental, etc.), the FileSet, and Storage the files are to be backed up (Storage device,

Media Pool). For more details, see the Job Resource in the Director chapter of this document.

-

Monitor

- The program that interfaces to all the daemons allowing the user or system administrator to

monitor Bareos status.

-

Resource

- A resource is a part of a configuration file that defines a specific unit of information that is

available to Bareos. It consists of several directives (individual configuration statements). For example,

the Job resource defines all the properties of a specific Job: name, schedule, Volume pool, backup type,

backup level, ...

-

Restore

- A restore is a configuration resource that describes the operation of recovering a file from backup

media. It is the inverse of a save, except that in most cases, a restore will normally have a small set

of files to restore, while normally a Save backs up all the files on the system. Of course, after a disk

crash, Bareos can be called upon to do a full Restore of all files that were on the system.

-

Schedule

- A Schedule is a configuration resource that defines when the Bareos Job will be scheduled for

execution. To use the Schedule, the Job resource will refer to the name of the Schedule. For more

details, see the Schedule Resource in the Director chapter of this document.

-

Service

- This is a program that remains permanently in memory awaiting instructions. In Unix

environments, services are also known as daemons.

-

Storage Coordinates

- The information returned from the Storage Services that uniquely locates a file

on a backup medium. It consists of two parts: one part pertains to each file saved, and the other

part pertains to the whole Job. Normally, this information is saved in the Catalog so that the user

doesn’t need specific knowledge of the Storage Coordinates. The Storage Coordinates include the File

Attributes (see above) plus the unique location of the information on the backup Volume.

-

Storage Daemon

- The Storage daemon, sometimes referred to as the SD, is the code that writes the

attributes and data to a storage Volume (usually a tape or disk).

-

Session

- Normally refers to the internal conversation between the File daemon and the Storage daemon.

The File daemon opens a session with the Storage daemon to save a FileSet or to restore it. A session

has a one-to-one correspondence to a Bareos Job (see above).

-

Verify

- A verify is a job that compares the current file attributes to the attributes that have previously been

stored in the Bareos Catalog. This feature can be used for detecting changes to critical system files

similar to what a file integrity checker like Tripwire does. One of the major advantages of using Bareos

to do this is that on the machine you want protected such as a server, you can run just the File daemon,

and the Director, Storage daemon, and Catalog reside on a different machine. As a consequence, if

your server is ever compromised, it is unlikely that your verification database will be tampered with.

Verify can also be used to check that the most recent Job data written to a Volume agrees with what is

stored in the Catalog (i.e. it compares the file attributes), *or it can check the Volume contents against

the original files on disk.

-

Retention Period

- There are various kinds of retention periods that Bareos recognizes. The most important

are the File Retention Period, Job Retention Period, and the Volume Retention Period. Each of

these retention periods applies to the time that specific records will be kept in the Catalog database.

This should not be confused with the time that the data saved to a Volume is valid.

The File Retention Period determines the time that File records are kept in the catalog database. This

period is important for two reasons: the first is that as long as File records remain in the database,

you can ”browse” the database with a console program and restore any individual file. Once the File

records are removed or pruned from the database, the individual files of a backup job can no longer be

”browsed”. The second reason for carefully choosing the File Retention Period is because the volume

of the database File records use the most storage space in the database. As a consequence, you must

ensure that regular ”pruning” of the database file records is done to keep your database from growing

too large. (See the Console prune command for more details on this subject).

The Job Retention Period is the length of time that Job records will be kept in the database. Note, all

the File records are tied to the Job that saved those files. The File records can be purged leaving the

Job records. In this case, information will be available about the jobs that ran, but not the details of

the files that were backed up. Normally, when a Job record is purged, all its File records will also be

purged.

The Volume Retention Period is the minimum of time that a Volume will be kept before it is reused.

Bareos will normally never overwrite a Volume that contains the only backup copy of a file. Under

ideal conditions, the Catalog would retain entries for all files backed up for all current Volumes. Once a

Volume is overwritten, the files that were backed up on that Volume are automatically removed from the

Catalog. However, if there is a very large pool of Volumes or a Volume is never overwritten, the Catalog

database may become enormous. To keep the Catalog to a manageable size, the backup information

should be removed from the Catalog after the defined File Retention Period. Bareos provides the

mechanisms for the catalog to be automatically pruned according to the retention periods defined.

-

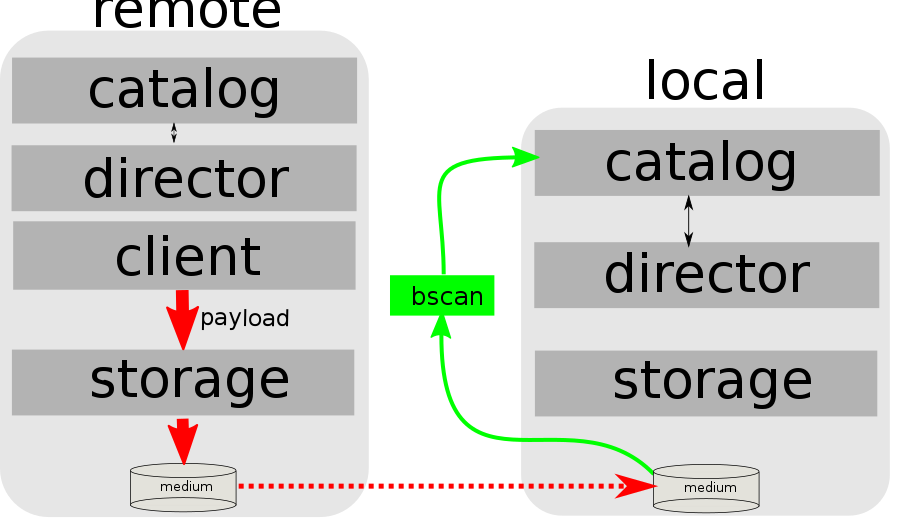

Scan

- A Scan operation causes the contents of a Volume or a series of Volumes to be scanned. These

Volumes with the information on which files they contain are restored to the Bareos Catalog. Once the

information is restored to the Catalog, the files contained on those Volumes may be easily restored.

This function is particularly useful if certain Volumes or Jobs have exceeded their retention period and

have been pruned or purged from the Catalog. Scanning data from Volumes into the Catalog is done

by using the bscan program. See the bscan section of the Bareos Utilities chapter of this manual for

more details.

-

Volume

- A Volume is an archive unit, normally a tape or a named disk file where Bareos stores the data

from one or more backup jobs. All Bareos Volumes have a software label written to the Volume by

Bareos so that it identifies what Volume it is really reading. (Normally there should be no confusion

with disk files, but with tapes, it is easy to mount the wrong one.)

#

Bareos is a backup, restore and verification program and is not a complete disaster recovery system in itself, but it

can be a key part of one if you plan carefully and follow the instructions included in the Disaster Recovery chapter

of this manual.

#

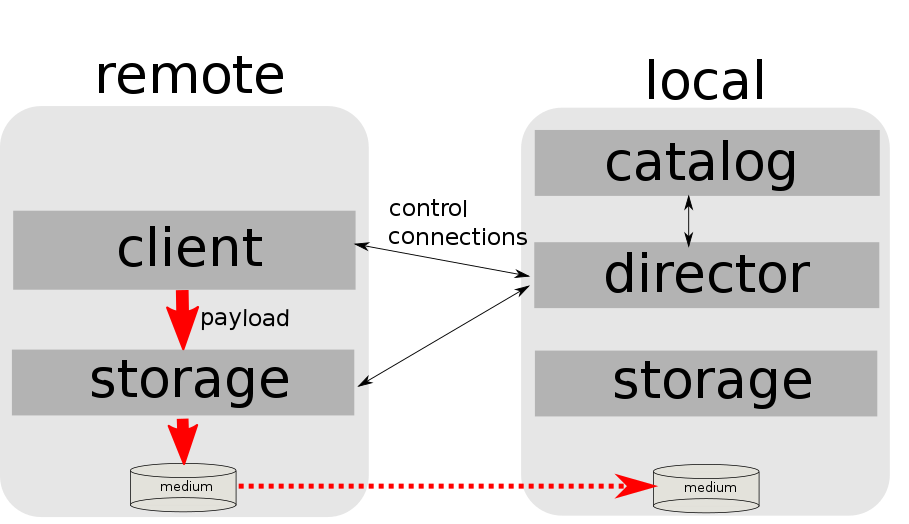

The following block diagram shows the typical interactions between the Bareos Services for a backup job. Each

block represents in general a separate process (normally a daemon). In general, the Director oversees the flow of

information. It also maintains the Catalog.

#

#

- Job Control

- Network backup/restore with centralized Director.

- Internal scheduler for automatic Job execution.

- Scheduling of multiple Jobs at the same time.

- You may run one Job at a time or multiple simultaneous Jobs (sometimes called multiplexing).

- Job sequencing using priorities.

- Console interface to the Director allowing complete control. Some GUIs are also available.

- Security

- Verification of files previously cataloged, permitting a Tripwire like capability (system break-in

detection).

- CRAM-MD5 password authentication between each component (daemon).

- Configurable TLS (SSL) communications encryption between each component.

- Configurable Data (on Volume) encryption on a Client by Client basis.

- Computation of MD5 or SHA1 signatures of the file data if requested.

- Restore Features

- Restore of one or more files selected interactively either for the current backup or a backup prior

to a specified time and date.

- Listing and Restoration of files using stand-alone bls and bextract tool programs. Among other

things, this permits extraction of files when Bareos and/or the catalog are not available. Note, the

recommended way to restore files is using the restore command in the Console. These programs

are designed for use as a last resort.

- Ability to restore the catalog database rapidly by using bootstrap files (previously saved).

- Ability to recreate the catalog database by scanning backup Volumes using the bscan program.

- SQL Catalog

- Catalog database facility for remembering Volumes, Pools, Jobs, and Files backed up.

- Support for PostgreSQL, MySQL and SQLite Catalog databases.

- User extensible queries to the PostgreSQL, MySQL and SQLite databases.

- Advanced Volume and Pool Management

- Labeled Volumes, preventing accidental overwriting (at least by Bareos).

- Any number of Jobs and Clients can be backed up to a single Volume. That is, you can backup

and restore Linux, Unix and Windows machines to the same Volume.

- Multi-volume saves. When a Volume is full, Bareos automatically requests the next Volume and

continues the backup.

- Pool and Volume library management providing Volume flexibility (e.g. monthly, weekly, daily

Volume sets, Volume sets segregated by Client, ...).

- Machine independent Volume data format. Linux, Solaris, and Windows clients can all be backed

up to the same Volume if desired.

- The Volume data format is upwards compatible so that old Volumes can always be read.

- A flexible message handler including routing of messages from any daemon back to the Director

and automatic email reporting.

- Data spooling to disk during backup with subsequent write to tape from the spooled disk files.

This prevents tape ”shoe shine” during Incremental/Differential backups.

- Advanced Support for most Storage Devices

- Autochanger support using a simple shell interface that can interface to virtually any autoloader

program. A script for mtx is provided.

- Support for autochanger barcodes – automatic tape labeling from barcodes.

- Automatic support for multiple autochanger magazines either using barcodes or by reading the

tapes.

- Support for multiple drive autochangers.

- Raw device backup/restore. Restore must be to the same device.

- All Volume blocks contain a data checksum.

- Migration support – move data from one Pool to another or one Volume to another.

- Multi-Operating System Support

- Programmed to handle arbitrarily long filenames and messages.

- Compression on a file by file basis done by the Client program if requested before network transit.

- Saves and restores POSIX ACLs and Extended Attributes on most OSes if enabled.

- Access control lists for Consoles that permit restricting user access to only their data.

- Support for save/restore of files larger than 2GB.

- Support ANSI and IBM tape labels.

- Support for Unicode filenames (e.g. Chinese) on Win32 machines

- Consistent backup of open files on Win32 systems using Volume Shadow Copy (VSS).

- Support for path/filename lengths of up to 64K on Win32 machines (unlimited on Unix/Linux

machines).

- Miscellaneous

- Multi-threaded implementation.

#

- Bareos handles multi-volume backups.

- A full comprehensive SQL standard database of all files backed up. This permits online viewing of files

saved on any particular Volume.

- Automatic pruning of the database (removal of old records) thus simplifying database administration.

- The modular but integrated design makes Bareos very scalable.

- Bareos has a built-in Job scheduler.

- The Volume format is documented and there are simple C programs to read/write it.

- Bareos uses well defined (IANA registered) TCP/IP ports – no rpcs, no shared memory.

- Bareos installation and configuration is relatively simple compared to other comparable products.

- Aside from several GUI administrative interfaces, Bareos has a comprehensive shell administrative

interface, which allows the administrator to use tools such as ssh to administrate any part of Bareos

from anywhere.

#

- It is possible to configure the Bareos Director to use multiple Catalogs. However, this is neither

advised, nor supported. Multiple catalogs require more management because in general you must know

what catalog contains what data, e.g. currently, all Pools are defined in each catalog.

- Bareos can generally restore any backup made from one client to any other client. However, if the

architecture is significantly different (i.e. 32 bit architecture to 64 bit or Win32 to Unix), some

restrictions may apply (e.g. Solaris door files do not exist on other Unix/Linux machines; there are

reports that Zlib compression written with 64 bit machines does not always read correctly on a 32 bit

machine).

#

- Names (resource names, volume names, and such) defined in Bareos configuration files are limited to a

fixed number of characters. Currently the limit is defined as 127 characters. Note, this does not apply

to filenames, which may be arbitrarily long.

- Command line input to some of the stand alone tools – e.g. btape, bconsole is restricted to several

hundred characters maximum. Normally, this is not a restriction, except in the case of listing multiple

Volume names for programs such as bscan. To avoid this command line length restriction, please use

a .bsr file to specify the Volume names.

- Bareos configuration files for each of the components can be any length. However, the length of an

individual line is limited to 500 characters after which it is truncated. If you need lines longer than 500

characters for directives such as ACLs where they permit a list of names are character strings simply

specify multiple short lines repeating the directive on each line but with different list values.

#

- Bareos’s Differential and Incremental normal backups are based on time stamps. Consequently, if you

move files into an existing directory or move a whole directory into the backup fileset after a Full

backup, those files will probably not be backed up by an Incremental save because they will have

old dates. This problem is corrected by using Accurate mode backups or by explicitly updating the

date/time stamp on all moved files.

- In non Accurate mode, files deleted after a Full save will be included in a restoration. This is typical

for most similar backup programs. To avoid this, use Accurate mode backup.

#

If you are like me, you want to get Bareos running immediately to get a feel for it, then later you want to go back

and read about all the details. This chapter attempts to accomplish just that: get you going quickly without all the

details.

Bareos comes prepackaged for a number of Linux distributions. So the easiest way to get to a running Bareos

installation, is to use a platform where prepacked Bareos packages are available. Additional information can be

found in the chapter Operating Systems.

If Bareos is available as a package, only 4 steps are required to get to a running Bareos system:

- Decide about the Bareos release to use

- Install the Bareos Software Packages

- Prepare Bareos database

- Start the daemons

This will start a very basic Bareos installation which will regularly backup a directory to disk. In

order to fit it to your needs, you’ll have to adapt the configuration and might want to backup other

clients.

#

You’ll find Bareos binary package repositories at http://download.bareos.org/. The latest stable released version

is available at http://download.bareos.org/bareos/release/latest/.

The public key to verify the repository is also in repository directory (Release.key for Debian based distributions,

repodata/repomd.xml.key for RPM based distributions).

Section Install the Bareos Software Packages describes how to add the software repository to your

system.

#

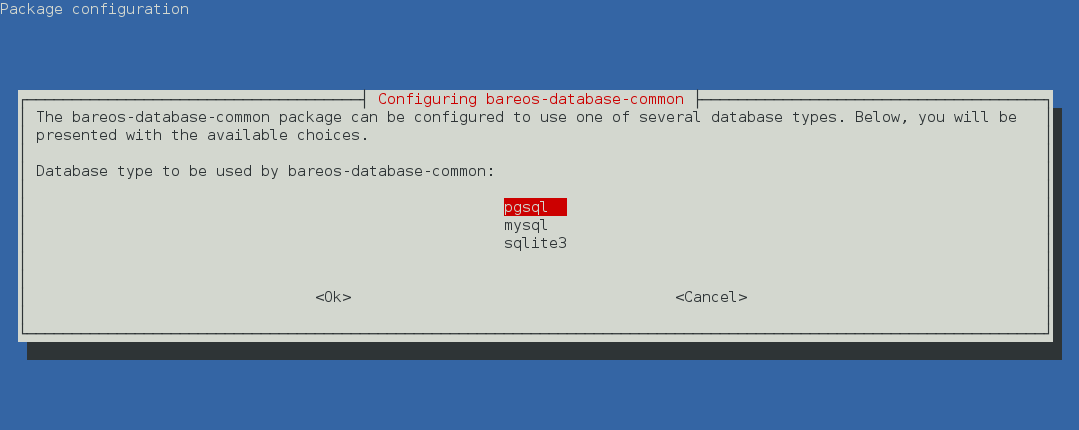

Bareos offers the following database backends:

- PostgreSQL by package bareos-database-postgresql. This is the recommended backend.

- MariaDB/MySQL by package bareos-database-mysql

- Sqlite by package bareos-database-sqlite3

Please note! The Sqlite backend is only intended for testing, not for productive use.

PostgreSQL

is the default backend.

MariaDB/MySQL

backend is also included.

Sqlite

backend is intended for testing purposes only.

The Bareos database packages have there dependencies only to the database client packages, therefore the database

itself must be installed manually.

If you do not explicitly choose a database backend, your operating system installer will choose one for you. The

default should be PostgreSQL, but depending on your operating system and the already installed packages, this may

differ.

#

The package bareos is only a meta package, that contains dependencies to the main components of Bareos, see

Bareos Packages. If you want to setup a distributed environment (like one Director, separate database server,

multiple Storage daemons) you have to choose the corresponding Bareos packages to install on each hosts instead of

just installing the bareos package.

#

Bareos Version >= 15.2.0 requires the Jansson library package. On RHEL 7 it is available through the RHEL Server

Optional channel. On CentOS 7 and Fedora is it included on the main repository.

#

# define parameter

#

DIST=RHEL_7

# or

# DIST=CentOS_7

# DIST=Fedora_26

# DIST=Fedora_25

RELEASE=release/17.2/

# or

# RELEASE=release/latest/

# RELEASE=experimental/nightly/

# add the Bareos repository

URL=http://download.bareos.org/bareos/$RELEASE/$DIST

wget -O /etc/yum.repos.d/bareos.repo $URL/bareos.repo

# install Bareos packages

yum install bareos bareos-database-postgresql

Commands 2.1:

Bareos installation on RHEL ≥ 7 / CentOS ≥ 7 / Fedora

Bareos Version >= 15.2.0 requires the Jansson library package. This package is available on EPEL 6. Make sure, it

is available on your system.

#

# add EPEL repository, if not already present.

# Required for the jansson package.

#

rpm -Uhv https://dl.fedoraproject.org/pub/epel/epel-release-latest-6.noarch.rpm

#

# define parameter

#

DIST=RHEL_6

# DIST=CentOS_6

RELEASE=release/17.2/

# or

# RELEASE=release/latest/

# RELEASE=experimental/nightly/

# add the Bareos repository

URL=http://download.bareos.org/bareos/$RELEASE/$DIST

wget -O /etc/yum.repos.d/bareos.repo $URL/bareos.repo

# install Bareos packages

yum install bareos bareos-database-postgresql

Commands 2.2:

Bareos installation on RHEL ≥ 6 / CentOS ≥ 6

yum in RHEL 5/CentOS 5 has slightly different behaviour as far as dependency resolving is concerned: it sometimes

install a dependent package after the one that has the dependency defined. To make sure that it works, install the

desired Bareos database backend package first in a separate step:

#

# define parameter

#

DIST=RHEL_5

RELEASE=release/17.2/

# or

# RELEASE=release/latest/

# RELEASE=experimental/nightly/

# add the Bareos repository

URL=http://download.bareos.org/bareos/$RELEASE/$DIST

wget -O /etc/yum.repos.d/bareos.repo $URL/bareos.repo

# install Bareos packages

yum install bareos-database-postgresql

yum install bareos

Commands 2.3:

Bareos installation on RHEL 5 / CentOS 5

#

#

# define parameter

#

DIST=SLE_12_SP3

# or

# DIST=SLE_12_SP2

# DIST=SLE_12_SP1

# DIST=SLE_11_SP4

# DIST=openSUSE_Leap_42.3

# DIST=openSUSE_Leap_42.2

RELEASE=release/17.2/

# or

# RELEASE=release/latest/

# RELEASE=experimental/nightly/

# add the Bareos repository

URL=http://download.bareos.org/bareos/$RELEASE/$DIST

zypper addrepo --refresh $URL/bareos.repo

# install Bareos packages

zypper install bareos bareos-database-postgresql

Commands 2.4:

Bareos installation on SLES / openSUSE

#

Bareos Version >= 15.2.0 requires the Jansson library package. On Ubuntu is it available in Ubuntu Universe. In

Debian, is it included in the main repository.

#

# define parameter

#

DIST=Debian_9.0

# or

# DIST=Debian_8.0

# DIST=xUbuntu_16.04

# DIST=xUbuntu_14.04

# DIST=xUbuntu_12.04

RELEASE=release/17.2/

# or

# RELEASE=release/latest/

# RELEASE=experimental/nightly/

URL=http://download.bareos.org/bareos/$RELEASE/$DIST

# add the Bareos repository

printf "deb $URL /\n" > /etc/apt/sources.list.d/bareos.list

# add package key

wget -q $URL/Release.key -O- | apt-key add -

# install Bareos packages

apt-get update

apt-get install bareos bareos-database-postgresql

Commands 2.5:

Bareos installation on Debian / Ubuntu

If you prefer using the versions of Bareos directly integrated into the distributions, please note that there are some

differences, see Limitations of the Debian.org/Ubuntu Universe version of Bareos.

#

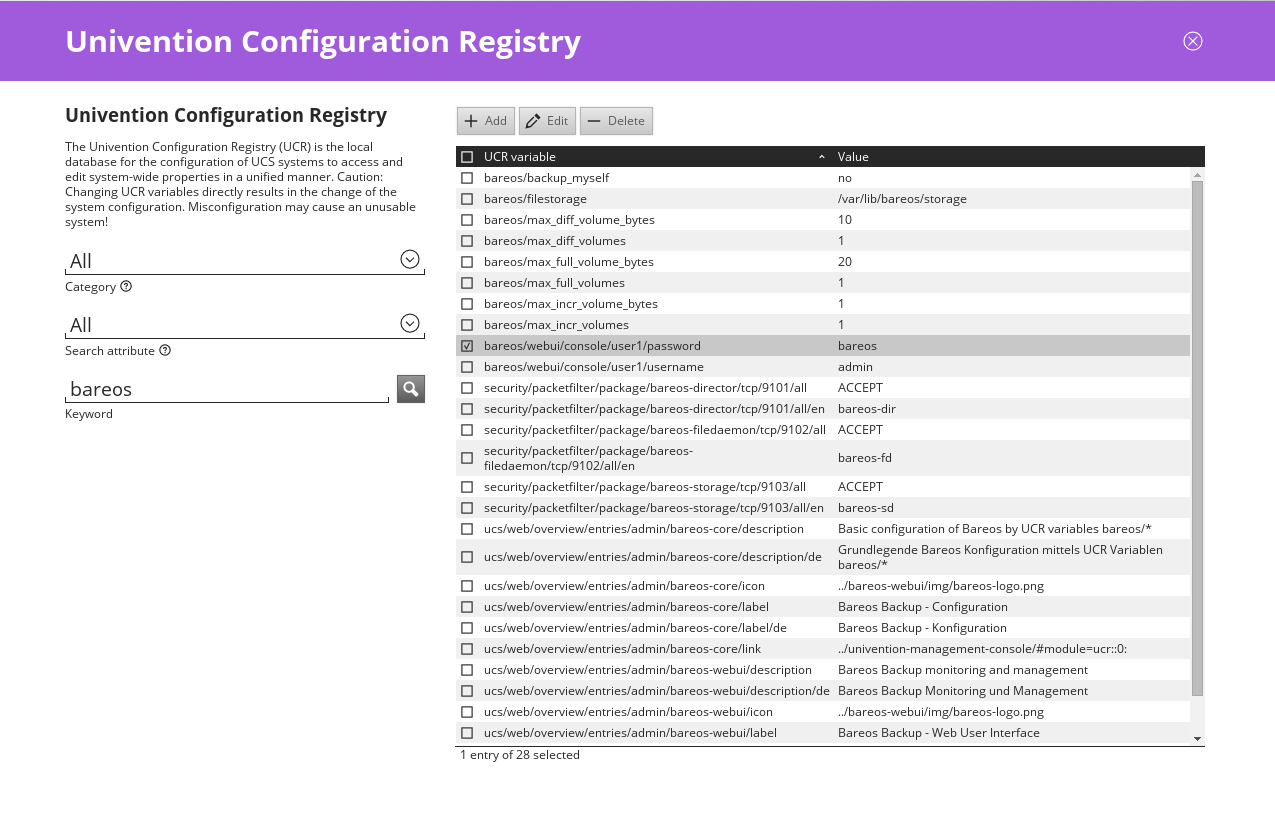

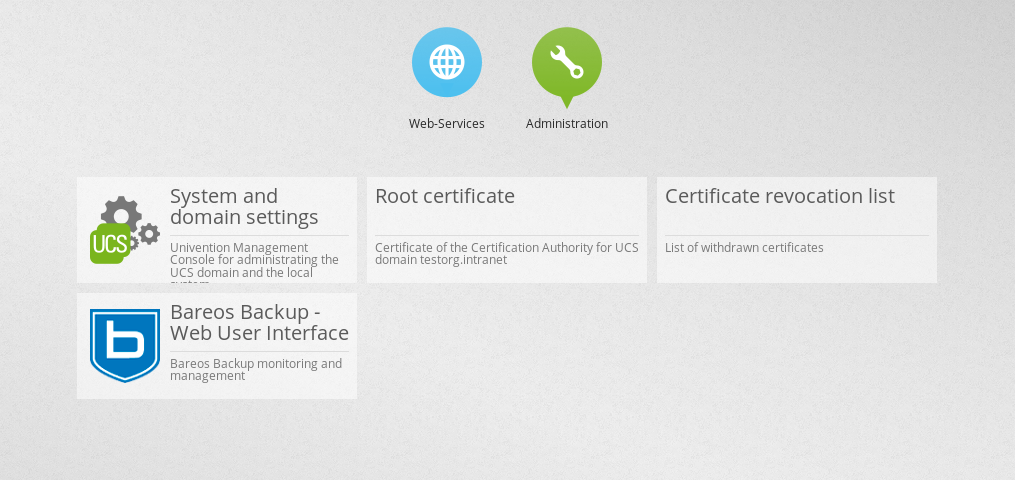

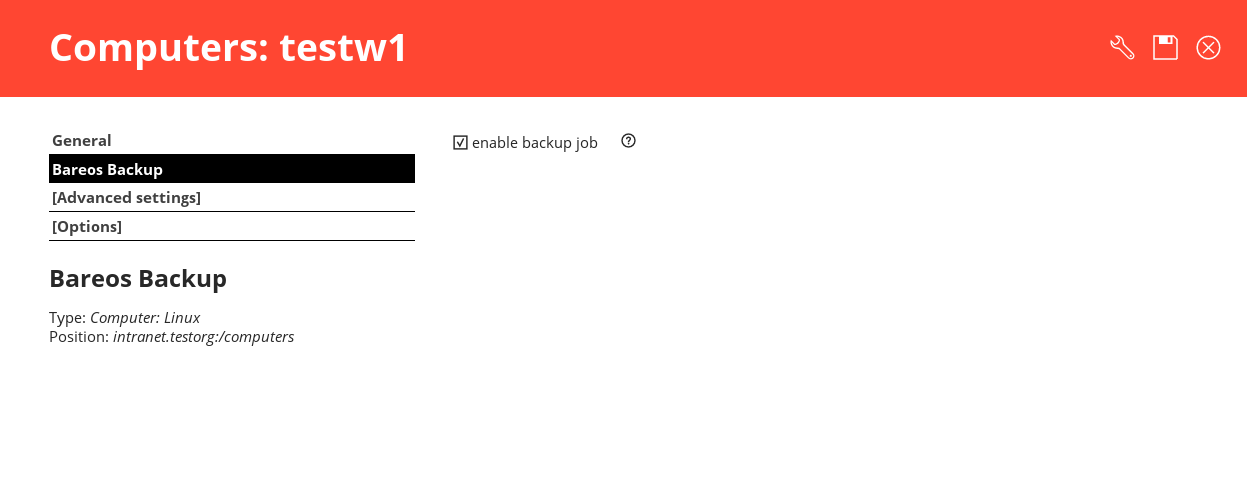

Univention

Bareos offers additional functionality and integration into an Univention Corporate Server environment. Please

follow the intructions in Univention Corporate Server.

If you are not interested in this additional functionality, the commands described in Install on Debian based Linux

Distributions will also work for Univention Corporate Servers.

#

We assume that you have already your database installed and basically running. Using the PostgreSQL database

backend is recommended.

The easiest way to set up a database is using an system account that have passwordless local access to the database.

Often this is the user root for MySQL and the user postgres for PostgreSQL.

For details, see chapter Catalog Maintenance.

#

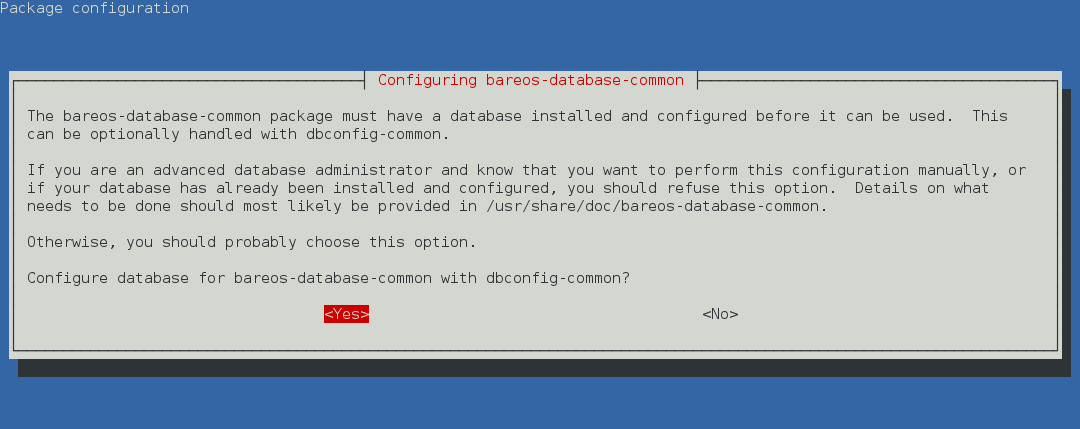

Since Bareos Version >= 14.2.0 the Debian (and Ubuntu) based packages support the dbconfig-common mechanism

to create and update the Bareos database.

Follow the instructions during install to configure it according to your needs.

If you decide not to use dbconfig-common (selecting <No> on the initial dialog), follow the instructions for Other

Platforms.

The selectable database backends depend on the bareos-database-* packages installed.

For details see dbconfig-common (Debian).

#

If your are using PostgreSQL and your PostgreSQL administration user is postgres (default), use following

commands:

su postgres -c /usr/lib/bareos/scripts/create_bareos_database

su postgres -c /usr/lib/bareos/scripts/make_bareos_tables

su postgres -c /usr/lib/bareos/scripts/grant_bareos_privileges

Commands 2.6:

Setup Bareos catalog with PostgreSQL

Make sure, that root has direct access to the local MySQL server. Check if the command mysql connects to the

database without defining the password. This is the default on RedHat and SUSE distributions. On other systems

(Debian, Ubuntu), create the file ~/.my.cnf with your authentication informations:

[client]

host=localhost

user=root

password=YourPasswordForAccessingMysqlAsRoot

Configuration 2.7:

MySQL

credentials

file

.my.cnf

It is recommended, to secure the Bareos database connection with a password. See Catalog Maintenance – MySQL

about how to archieve this. For testing, using a password-less MySQL connection is probable okay. Setup the Bareos

database tables by following commands:

/usr/lib/bareos/scripts/create_bareos_database

/usr/lib/bareos/scripts/make_bareos_tables

/usr/lib/bareos/scripts/grant_bareos_privileges

Commands 2.8:

Setup Bareos catalog with MySQL

As some Bareos updates require a database schema update, therefore the file /root/.my.cnf might also be useful in

the future.

#

service bareos-dir start

service bareos-sd start

service bareos-fd start

Commands 2.9:

Start the Bareos Daemons

You will eventually have to allow access to the ports 9101-9103, used by Bareos.

Now you should be able to access the director using the bconsole.

When you want to use the bareos-webui, please refer to the chapter Installing Bareos Webui.

#

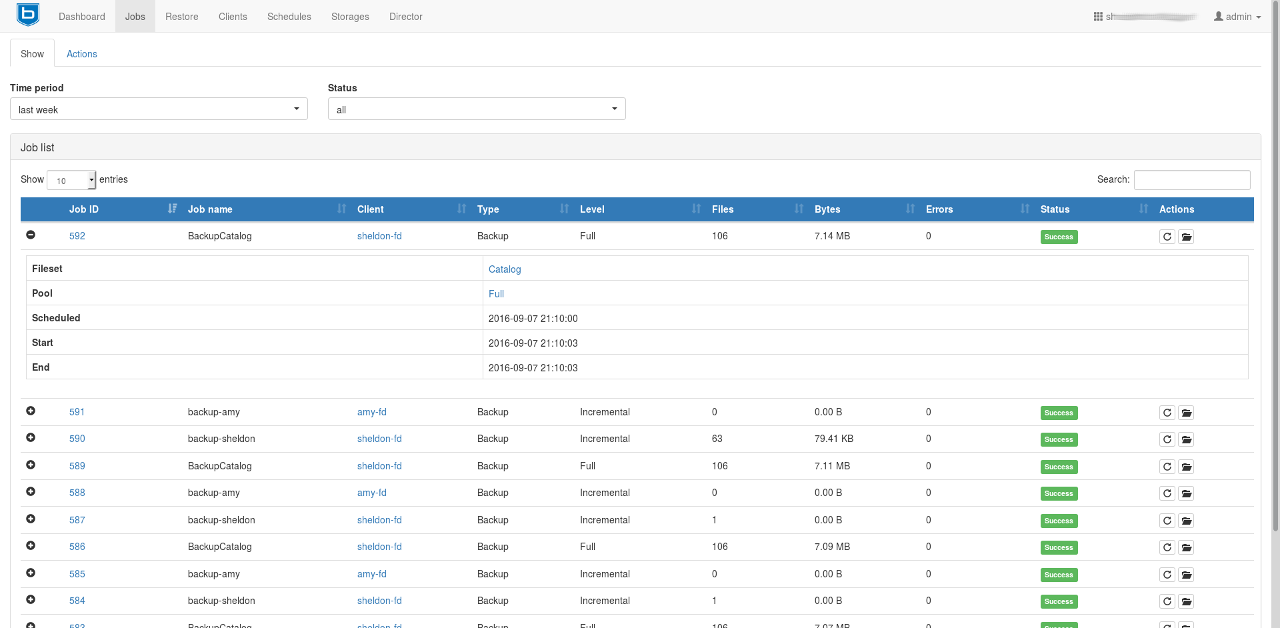

This chapter addresses the installation process of the Bareos Webui.

Since Version >= 15.2.0 Bareos Webui is part of the Bareos project and available for a number of

platforms.

#

- Intuitive web interface

- Multilinugual

- Can access multiple directors and catalogs

- Individual accounts and ACL support via Bareos restricted named consoles

- Tape Autochanger management, with the possibility to label, import/export media and update your

autochanger slot status

- Temporarly enable or disable jobs, clients and schedules and also see their current state

- Show

- Detailed information about Jobs, Clients, Filesets, Pools, Volumes, Storages, Schedules, Logs and

Director messages

- Filedaemon, Storage- and Director updates

- Client, Director, Storage and Scheduler status

- Backup Jobs

- Start, cancel, rerun and restore from.

- Show the file list of backup jobs

- Restore files by browsing through a filetree of your backup jobs.

- Merge your backup jobs history and filesets of a client or use a single backup job for restore.

- Restore files to a different client instead of the origin

- bconsole interface (limited to non-interactive commands)

#

- A platform, for which the bareos-webui package is available, see Bareos Packages.

- A working Bareos environment.

- Bareos Director version >= Bareos Webui version.

- The Bareos Webui can be installed on any host. It does not have to be installed on the same as the

Bareos Director.

- The default installation uses an Apache webserver with mod-rewrite, mod-php and mod-setenv.

- PHP >= 5.3.23

- On SUSE Linux Enterprise 12 you need the additional SUSE Linux Enterprise Module for Web Scripting

12.

#

Bareos Webui Version >= 16.2.4 incorporates the required Zend Framework 2 components, no extra Zend

Framework installation is required. For older versions of bareos-webui, you must install Zend Framework

separately. Unfortunately, not all distributions offer Zend Framework 2 packages. The following list shows where to

get the Zend Framework 2 package:

- RHEL, CentOS

- Fedora

- SUSE, Debian, Ubuntu

Also be aware, that older versions of Bareos Director do not support the Subdirectory Configuration Scheme and

therefore Bareos configuration resource files must be included manually.

#

#

If not already done, add the Bareos repository that is matching your Linux distribution. Please have

a look at the chapter Install the Bareos Software Packages for more information on how to achieve

this.

#

After adding the repository simply install the bareos-webui package via your package manager.

- RHEL, CentOS and Fedora

yum install bareos-webui

Commands 3.1:

or

dnf install bareos-webui

Commands 3.2:

#

This assumes, Bareos Director and Bareos Webui are installed on the same host.

- If you are using SELinux, allow HTTPD scripts and modules make network connections:

setsebool -P httpd_can_network_connect on

Commands 3.5:

For details, see SELinux.

- Restart Apache (to load configuration provided by bareos-webui, see Configure your Apache

Webserver)

- Use bconsole to create a user with name admin and password secret and permissions defined in

webui-adminDir

Profile:

*configure add console name=admin password=secret profile=webui-admin

bconsole 3.6:

add a named console

Of course, you can choose other names and passwords. For details, see Create a restricted consoles.

- Login to http://HOSTNAME/bareos-webui with username and password as created in 3.

#

There is not need, that Bareos Webui itself provide a user management. Instead it uses so named ConsoleDir defined

in the Bareos Director. You can have multiple consoles with different names and passwords, sort of like multiple

users, each with different privileges.

At least one ConsoleDir is required to use the Bareos Webui.

To allow a user with name admin and password secret to access the Bareos Director with permissions defined in

the webui-adminDir

Profile (see Configuration of profile resources), either

- create a file /etc/bareos/bareos-dir.d/console/admin.conf with following content:

Console {

Name = "admin"

Password = "secret"

Profile = "webui-admin"

}

Resource 3.7:

bareos-dir.d/console/admin.conf

To enable this, reload or restart your Bareos Director.

- or use the bconsole:

*configure add console name=admin password=secret profile=webui-admin

bconsole 3.8:

add console

For details, please read Console Resource.

The package bareos-webui comes with a predefined profile for Bareos Webui: webui-adminDir

Profile.

If your Bareos Webui is installed on another system than the Bareos Director, you have to copy the profile to the

Bareos Director.

This is the default profile, giving access to all Bareos resources and allowing all commands used by the Bareos

Webui:

Profile {

Name = webui-admin

CommandACL = !.bvfs_clear_cache, !.exit, !.sql, !configure, !create, !delete, !purge, !sqlquery, !umount, !unmount, *all*

Job ACL = *all*

Schedule ACL = *all*

Catalog ACL = *all*

Pool ACL = *all*

Storage ACL = *all*

Client ACL = *all*

FileSet ACL = *all*

Where ACL = *all*

Plugin Options ACL = *all*

}

Resource 3.9:

bareos-dir.d/profile/webui-admin.conf

The ProfileDir itself does not give any access to the Bareos Director, but can be used by ConsoleDir, which do give

access to the Bareos Director, see Create a restricted consoles.

For details, please read Profile Resource.

To use Bareos Director on a system with SELinux enabled, permission must be given to HTTPD to make network

connections:

setsebool -P httpd_can_network_connect on

Commands 3.10:

The package bareos-webui provides a default configuration for Apache. Depending on your distribution, it is

installed at /etc/apache2/conf.d/bareos-webui.conf, /etc/httpd/conf.d/bareos-webui.conf or

/etc/apache2/available-conf/bareos-webui.conf.

The required Apache modules, setenv, rewrite and php are enabled via package postinstall script. However, after

installing the bareos-webui package, you need to restart your Apache webserver manually.

Configure your directors in /etc/bareos-webui/directors.ini to match your settings.

The configuration file /etc/bareos-webui/directors.ini should look similar to this:

;

; Bareos WebUI Configuration File

;

; File: /etc/bareos-webui/directors.ini

;

;------------------------------------------------------------------------------

; Section localhost-dir

;------------------------------------------------------------------------------

[localhost-dir]

; Enable or disable section. Possible values are "yes" or "no", the default is "yes".

enabled = "yes"

; Fill in the IP-Address or FQDN of you director.

diraddress = "localhost"

; Default value is 9101

dirport = 9101

; Set catalog to explicit value if you have multiple catalogs

;catalog = "MyCatalog"

; TLS verify peer

; Possible values: true or false

tls_verify_peer = false

; Server can do TLS

; Possible values: true or false

server_can_do_tls = false

; Server requires TLS

; Possible values: true or false

server_requires_tls = false

; Client can do TLS

; Possible values: true or false

client_can_do_tls = false

; Client requires TLS

; Possible value: true or false

client_requires_tls = false

; Path to the certificate authority file

; E.g. ca_file = "/etc/bareos-webui/tls/BareosCA.crt"

;ca_file = ""

; Path to the cert file which needs to contain the client certificate and the key in PEM encoding

; E.g. ca_file = "/etc/bareos-webui/tls/restricted-named-console.pem"

;cert_file = ""

; Passphrase needed to unlock the above cert file if set

;cert_file_passphrase = ""

; Allowed common names

; E.g. allowed_cns = "host1.example.com"

;allowed_cns = ""

;------------------------------------------------------------------------------

; Section another-host-dir

;------------------------------------------------------------------------------

[another-host-dir]

enabled = "no"

diraddress = "192.168.120.1"

dirport = 9101

;catalog = "MyCatalog"

;tls_verify_peer = false

;server_can_do_tls = false

;server_requires_tls = false

;client_can_do_tls = false

;client_requires_tls = false

;ca_file = ""

;cert_file = ""

;cert_file_passphrase = ""

;allowed_cns = ""

Configuration 3.11:

/etc/bareos-webui/directors.ini

You can add as many directors as you want, also the same host with a different name and different catalog, if you

have multiple catalogs.

Since Version >= 16.2.2 you are able to configure some parameters of the Bareos Webui to your needs.

;

; Bareos WebUI Configuration File

;

; File: /etc/bareos-webui/configuration.ini

;

;------------------------------------------------------------------------------

; SESSION SETTINGS

;------------------------------------------------------------------------------

;

[session]

; Default: 3600 seconds

timeout=3600

;------------------------------------------------------------------------------

; DASHBOARD SETTINGS

;------------------------------------------------------------------------------

[dashboard]

; Autorefresh Interval

; Default: 60000 milliseconds

autorefresh_interval=60000

;------------------------------------------------------------------------------

; TABLE SETTINGS

;------------------------------------------------------------------------------

[tables]

; Possible values for pagination

; Default: 10,25,50,100

pagination_values=10,25,50,100

; Default number of rows per page

; for possible values see pagination_values

; Default: 25

pagination_default_value=25

; State saving - restore table state on page reload.

; Default: false

save_previous_state=false

;------------------------------------------------------------------------------

; VARIOUS SETTINGS

;------------------------------------------------------------------------------

[autochanger]

; Pooltype for label to use as filter.

; Default: none

labelpooltype=scratch

Configuration 3.12:

/etc/bareos-webui/configuration.ini

#

#

The Bareos Webui Director profile shipped with Bareos 15.2 (webuiDir

Profile in the file

/etc/bareos/bareos-dir.d/webui-profiles.conf) is not sufficient to use the Bareos Webui 16.2. This has

several reasons:

- The handling of acls is more strict in Bareos 16.2 than it has been in Bareos 15.2. Substring matching

is no longer enabled, therefore you need to change .bvfs_* to .bvfs_.* in your Command ACL Dir

Profile

to have a proper regular expression. Otherwise the restore module won’t work any longer, especially

the file browser.

- The Bareos Webui 16.2 uses following additional commands:

- .help

- .schedule

- .pools

- import

- export

- update

- release

- enable

- disable

If you used an unmodified /etc/bareos/bareos-dir.d/webui-profiles.conf file, the easiest way is to

overwrite it with the new profile file /etc/bareos/bareos-dir.d/profile/webui-admin.conf. The new

webui-adminDir

Profile allows all commands, except of the dangerous ones, see Configuration of profile

resources.

#

Since Version >= 16.2.0 it is possible to work with different catalogs. Therefore the catalog parameter has been

introduced. If you don’t set a catalog explicitly the default MyCatalogDir

Catalog will be used. Please see Configure your

/etc/bareos-webui/directors.ini for more details.

#

Since 16.2 the Bareos Webui introduced an additional configuration file besides the directors.ini file named

configuration.ini where you are able to adjust some parameters of the webui to your needs. Please see Configure

your /etc/bareos-webui/directors.ini for more details.

#

#

If you prefer to use Bareos Webui on Nginx with php5-fpm instead of Apache, a basic working configuration could

look like this:

server {

listen 9100;

server_name bareos;

root /var/www/bareos-webui/public;

location / {

index index.php;

try_files $uri $uri/ /index.php?$query_string;

}

location ~ .php$ {

include snippets/fastcgi-php.conf;

# php5-cgi alone:

# pass the PHP

# scripts to FastCGI server

# listening on 127.0.0.1:9000

#fastcgi_pass 127.0.0.1:9000;

# php5-fpm:

fastcgi_pass unix:/var/run/php5-fpm.sock;

# APPLICATION_ENV: set to ’development’ or ’production’

#fastcgi_param APPLICATION_ENV development;

fastcgi_param APPLICATION_ENV production;

}

}

Configuration 3.13:

bareos-webui on nginx

This will make the Bareos Webui accessible at http://bareos:9100/ (assuming your DNS resolve the hostname

bareos to the NGINX server).

#

In most cases, a Bareos update is simply done by a package update of the distribution. Please remind, that Bareos

Director and Bareos Storage Daemon must always have the same version. The version of the File Daemon may

differ, see chapter about ??.

#

When updating Bareos through the distribution packaging mechanism, the existing configuration kept as they

are.

If you don’t want to modify the behavior, there is normally no need to modify the configuration.

However, in some rare cases, configuration changes are required. These cases are described in the Release

Notes.

With Bareos version 16.2.4 the default configuration uses the Subdirectory Configuration Scheme. This scheme

offers various improvements. However, if your are updating from earlier versions, your existing single configuration

files (/etc/bareos/bareos-*.conf) stay in place and are contentiously used by Bareos. The new default

configuration resource files will also be installed (/etc/bareos/bareos-*.d/*/*.conf). However, they will only be

used, when the legacy configuration file does not exist.

See Updates from Bareos < 16.2.4 for details and how to migrate to Subdirectory Configuration Scheme.

#

Sometimes improvements in Bareos make it necessary to update the database scheme.

Please note! If the Bareos catalog database does not have the current schema, the Bareos Director refuses to

start.

Detailed information can then be found in the log file /var/log/bareos/bareos.log.

Take a look into the Release Notes to see which Bareos updates do require a database scheme update.

Please note! Especially the upgrade to Bareos ≥ 17.2.0 restructures the File database table. In larger

installations this is very time consuming and temporarily doubles the amount of required database disk

space.

#

Since Bareos Version >= 14.2.0 the Debian (and Ubuntu) based packages support the dbconfig-common mechanism

to create and update the Bareos database. If this is properly configured, the database schema will be automatically

adapted by the Bareos packages.

Please note! When using the PostgreSQL backend and updating to Bareos < 14.2.3, it is necessary to manually grant

database permissions, normally by using

root@linux:~#

su - postgres -c /usr/lib/bareos/scripts/grant_bareos_privileges

Commands 4.1:

For details see dbconfig-common (Debian).

If you disabled the usage of dbconfig-common, follow the instructions for Other Platforms.

#

This has to be done as database administrator. On most platforms Bareos knows only about the credentials to

access the Bareos database, but not about the database administrator to modify the database schema.

The task of updating the database schema is done by the script /usr/lib/bareos/scripts/update_bareos_tables.

However, this script requires administration access to the database. Depending on your distribution

and your database, this requires different preparations. More details can be found in chapter Catalog

Maintenance.

Please note! If you’re updating to Bareos <= 13.2.3 and have configured the Bareos database during install using

Bareos environment variables (db_name, db_user or db_password, see Catalog Maintenance), make sure to have

these variables defined in the same way when calling the update and grant scripts. Newer versions of Bareos read

these variables from the Director configuration file /etc/bareos/bareos-dir.conf. However, make sure that the

user running the database scripts has read access to this file (or set the environment variables). The postgres user

normally does not have the required permissions.

If your are using PostgreSQL and your PostgreSQL administrator is postgres (default), use following

commands:

su postgres -c /usr/lib/bareos/scripts/update_bareos_tables

su postgres -c /usr/lib/bareos/scripts/grant_bareos_privileges

Commands 4.2:

Update PostgreSQL database schema

The grant_bareos_privileges command is required, if new databases tables are introduced. It does not hurt to

run it multiple times.

After this, restart the Bareos Director and verify it starts without problems.

Make sure, that root has direct access to the local MySQL server. Check if the command mysql without parameter

connects to the database. If not, you may be required to adapt your local MySQL configuration file ~/.my.cnf. It

should look similar to this:

[client]

host=localhost

user=root

password=YourPasswordForAccessingMysqlAsRoot

Configuration 4.3:

MySQL

credentials

file

.my.cnf

If you are able to connect via the mysql to the database, run the following script from the Unix prompt:

/usr/lib/bareos/scripts/update_bareos_tables

Commands 4.4:

Update MySQL database schema

Currently on MySQL is it not necessary to run grant_bareos_privileges, because access to the database is

already given using wildcards.

After this, restart the Bareos Director and verify it starts without problems.

#

#

In order to make Bareos as flexible as possible, the directions given to Bareos are specified in several pieces. The

main instruction is the job resource, which defines a job. A backup job generally consists of a FileSet, a Client, a

Schedule for one or several levels or times of backups, a Pool, as well as additional instructions. Another way of

looking at it is the FileSet is what to backup; the Client is who to backup; the Schedule defines when, and the Pool

defines where (i.e. what Volume).

Typically one FileSet/Client combination will have one corresponding job. Most of the directives, such as FileSets,

Pools, Schedules, can be mixed and matched among the jobs. So you might have two different Job

definitions (resources) backing up different servers using the same Schedule, the same Fileset (backing

up the same directories on two machines) and maybe even the same Pools. The Schedule will define

what type of backup will run when (e.g. Full on Monday, incremental the rest of the week), and when

more than one job uses the same schedule, the job priority determines which actually runs first. If you

have a lot of jobs, you might want to use JobDefs, where you can set defaults for the jobs, which

can then be changed in the job resource, but this saves rewriting the identical parameters for each

job. In addition to the FileSets you want to back up, you should also have a job that backs up your

catalog.

Finally, be aware that in addition to the backup jobs there are restore, verify, and admin jobs, which have different

requirements.

#

If you have been using a program such as tar to backup your system, Pools, Volumes, and labeling may be a

bit confusing at first. A Volume is a single physical tape (or possibly a single file) on which Bareos

will write your backup data. Pools group together Volumes so that a backup is not restricted to the

length of a single Volume (tape). Consequently, rather than explicitly naming Volumes in your Job,

you specify a Pool, and Bareos will select the next appendable Volume from the Pool and mounts

it.

Although the basic Pool options are specified in the Director’s Pool resource, the real Pool is maintained in the

Bareos Catalog. It contains information taken from the Pool resource (configuration file) as well as information on

all the Volumes that have been added to the Pool.

For each Volume, Bareos maintains a fair amount of catalog information such as the first write date/time, the last

write date/time, the number of files on the Volume, the number of bytes on the Volume, the number of Mounts,

etc.

Before Bareos will read or write a Volume, the physical Volume must have a Bareos software label so that Bareos

can be sure the correct Volume is mounted. Depending on your configuration, this is either done automatically by

Bareos or manually using the label command in the Console program.

The steps for creating a Pool, adding Volumes to it, and writing software labels to the Volumes, may seem tedious

at first, but in fact, they are quite simple to do, and they allow you to use multiple Volumes (rather than being

limited to the size of a single tape). Pools also give you significant flexibility in your backup process. For example,

you can have a ”Daily” Pool of Volumes for Incremental backups and a ”Weekly” Pool of Volumes for Full backups.

By specifying the appropriate Pool in the daily and weekly backup Jobs, you thereby insure that no daily Job ever

writes to a Volume in the Weekly Pool and vice versa, and Bareos will tell you what tape is needed and

when.

For more on Pools, see the Pool Resource section of the Director Configuration chapter, or simply read on, and we

will come back to this subject later.

#

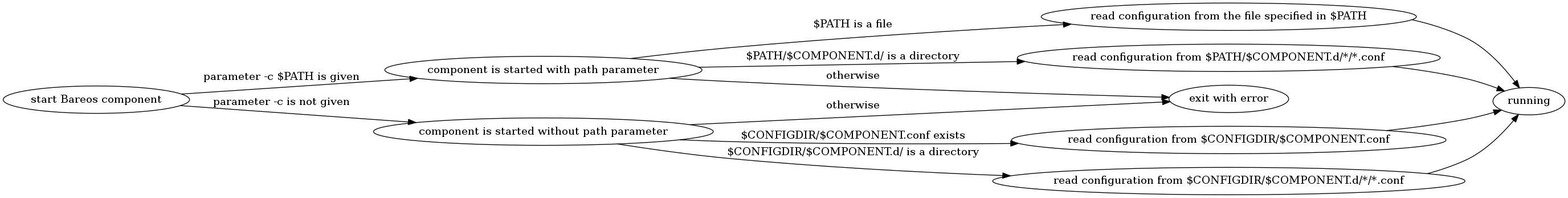

On Unix, Bareos configuration files are usually located in the /etc/bareos/ directory and are named accordingly to

the programs that use it. Since Bareos Version >= 16.2.4 the default configuration is stored as one file per resource

in subdirectories under bareos-dir.d, bareos-sd.d or bareos-fd.d. For details, see Customizing the

Configuration and Subdirectory Configuration Scheme.

#

You can test if your configuration file is syntactically correct by running the appropriate daemon with the -t option.

The daemon will process the configuration file and print any error messages then terminate.

As the Bareos Director and Bareos Storage Daemon runs as user bareos, testing the configuration should be done

as bareos.

This is especially required to test the Bareos Director, as it also connects to the database and checks if the catalog

schema version is correct. Depending on your database, only the bareos has permission to access

it.

su bareos -s /bin/sh -c "/usr/sbin/bareos-dir -t"

su bareos -s /bin/sh -c "/usr/sbin/bareos-sd -t"

bareos-fd -t

bconsole -t

bareos-tray-monitor -t

Commands 5.1:

Testing Configuration Files

#

This chapter will guide you through running Bareos. To do so, we assume you have installed Bareos.

However, we assume that you have not modified the configuration. The examples in this chapter use the

default configuration files and will write the volumes to disk in your /var/lib/bareos/storage/

directory.

The general flow of running Bareos is:

- Start the Database (if using PostgreSQL or MySQL/MariaDB)

- Installing Bareos

- Start the Bareos Daemons

- Start the Console program to interact with the Bareos Director

- Run a job

- Test recovering some files from the Volume just written to ensure the backup is good and that you

know how to recover. Better test before disaster strikes

- Add a second client.

Each of these steps is described in more detail below.

#

If you are using PostgreSQL or MySQL/MariaDB as the Bareos database, you should start it before you install

Bareos. If you are using Sqlite you need do nothing. Sqlite is automatically started by the Bareos

Director.

#

For installing Bareos, follow the instructions from the Installing Bareos chapter.

#

Assuming you have installed the packages, to start the three daemons, from your installation directory, simply

enter:

service bareos-dir start

service bareos-sd start

service bareos-fd start

bconsole 6.1:

start services

#

To communicate with the Bareos Director and to query the state of Bareos or run jobs, the bconsole program can

be used as a textual interface. Alternatively, for most purposes, also the Bareos Webui can be used, but for

simplicity, here we will describe only the bconsole program.

The bconsole runs the Bareos Console program, which connects to the Bareos Director. Since Bareos is a network

program, you can run the Console program anywhere on your network. Most frequently, however, one runs it on the

same machine as the Bareos Director. Normally, the Console program will print something similar to the

following:

root@linux:~#

bconsole

Connecting to Director bareos:9101

Enter a period to cancel a command.

*

Commands 6.2:

bconsole

The asterisk is the console command prompt.

Type help to see a list of available commands:

*help

Command Description

======= ===========

add Add media to a pool

autodisplay Autodisplay console messages

automount Automount after label

cancel Cancel a job

create Create DB Pool from resource

delete Delete volume, pool or job

disable Disable a job

enable Enable a job

estimate Performs FileSet estimate, listing gives full listing

exit Terminate Bconsole session

export Export volumes from normal slots to import/export slots

gui Non-interactive gui mode

help Print help on specific command

import Import volumes from import/export slots to normal slots

label Label a tape

list List objects from catalog

llist Full or long list like list command

messages Display pending messages

memory Print current memory usage

mount Mount storage

move Move slots in an autochanger

prune Prune expired records from catalog

purge Purge records from catalog

quit Terminate Bconsole session

query Query catalog

restore Restore files

relabel Relabel a tape

release Release storage

reload Reload conf file

rerun Rerun a job

run Run a job

status Report status

setbandwidth Sets bandwidth

setdebug Sets debug level

setip Sets new client address -- if authorized

show Show resource records

sqlquery Use SQL to query catalog

time Print current time

trace Turn on/off trace to file

unmount Unmount storage

umount Umount - for old-time Unix guys, see unmount

update Update volume, pool or stats

use Use specific catalog

var Does variable expansion

version Print Director version

wait Wait until no jobs are running

bconsole 6.3:

help

Details of the console program’s commands are explained in the Bareos Console chapter.

#

At this point, we assume you have done the following:

- Started the Database

- Installed Bareos

- Prepared the database for Bareos

- Started Bareos Director, Storage Daemon and File Daemon

- Invoked the Console program with bconsole

Furthermore, we assume for the moment you are using the default configuration files.

At this point, enter the show filesets and you should get something similar this:

*show filesets

...

FileSet {

Name = "SelfTest"

Include {

Options {

Signature = MD5

}

File = "/usr/sbin"

}

}

FileSet {

Name = "Catalog"

Include {

Options {

Signature = MD5

}

File = "/var/lib/bareos/bareos.sql"

File = "/etc/bareos"

}

}

...

bconsole 6.4:

show filesets

One of the FileSets is the pre-defined SelfTestDir

FileSet FileSet that will backup the /usr/sbin directory. For testing

purposes, we have chosen a directory of moderate size (about 30 Megabytes) and complexity without being too big.

The FileSet CatalogDir

FileSet is used for backing up Bareos’s catalog and is not of interest to us for the moment. You

can change what is backed up by editing the configuration and changing the File = line in the FileSetDir

resource.

Now is the time to run your first backup job. We are going to backup your Bareos source directory to

a File Volume in your /var/lib/bareos/storage/ directory just to show you how easy it is. Now

enter:

*status dir

bareos-dir Version: 13.2.0 (09 April 2013) x86_64-pc-linux-gnu debian Debian GNU/Linux 6.0 (squeeze)

Daemon started 23-May-13 13:17. Jobs: run=0, running=0 mode=0

Heap: heap=270,336 smbytes=59,285 max_bytes=59,285 bufs=239 max_bufs=239

Scheduled Jobs:

Level Type Pri Scheduled Name Volume

===================================================================================

Incremental Backup 10 23-May-13 23:05 BackupClient1 testvol

Full Backup 11 23-May-13 23:10 BackupCatalog testvol

====

Running Jobs:

Console connected at 23-May-13 13:34

No Jobs running.

====

bconsole 6.5:

status dir

where the times and the Director’s name will be different according to your setup. This shows that an Incremental

job is scheduled to run for the Job BackupClient1Dir

Job at 1:05am and that at 1:10, a BackupCatalogDir

Job is scheduled

to run.

Now enter:

*status client

Automatically selected Client: bareos-fd

Connecting to Client bareos-fd at bareos:9102

bareos-fd Version: 13.2.0 (09 April 2013) x86_64-pc-linux-gnu debian Debian GNU/Linux 6.0 (squeeze)

Daemon started 23-May-13 13:17. Jobs: run=0 running=0.

Heap: heap=135,168 smbytes=26,000 max_bytes=26,147 bufs=65 max_bufs=66

Sizeof: boffset_t=8 size_t=8 debug=0 trace=0 bwlimit=0kB/s

Running Jobs:

Director connected at: 23-May-13 13:58

No Jobs running.

====

bconsole 6.6:

status client

In this case, the client is named bareos-fdDir

Client your name might be different, but the line beginning

with bareos-fd Version is printed by your Bareos File Daemon, so we are now sure it is up and

running.

Finally do the same for your Bareos Storage Daemon with:

*status storage

Automatically selected Storage: File

Connecting to Storage daemon File at bareos:9103

bareos-sd Version: 13.2.0 (09 April 2013) x86_64-pc-linux-gnu debian Debian GNU/Linux 6.0 (squeeze)

Daemon started 23-May-13 13:17. Jobs: run=0, running=0.

Heap: heap=241,664 smbytes=28,574 max_bytes=88,969 bufs=73 max_bufs=74

Sizes: boffset_t=8 size_t=8 int32_t=4 int64_t=8 mode=0 bwlimit=0kB/s

Running Jobs:

No Jobs running.

====

Device status:

Device "FileStorage" (/var/lib/bareos/storage) is not open.

==

====

Used Volume status:

====

====

bconsole 6.7:

status storage

You will notice that the default Bareos Storage Daemon device is named FileDir

Storage and that it will use device

/var/lib/bareos/storage, which is not currently open.

Now, let’s actually run a job with:

run

bconsole 6.8:

run

you should get the following output:

Automatically selected Catalog: MyCatalog

Using Catalog "MyCatalog"

A job name must be specified.

The defined Job resources are:

1: BackupClient1

2: BackupCatalog

3: RestoreFiles

Select Job resource (1-3):

bconsole 6.9:

select job

Here, Bareos has listed the three different Jobs that you can run, and you should choose number 1 and type enter,

at which point you will get:

Run Backup job

JobName: BackupClient1

Level: Incremental

Client: bareos-fd

Format: Native

FileSet: SelfTest

Pool: Full (From Job resource)

NextPool: *None* (From unknown source)

Storage: File (From Job resource)

When: 2013-05-23 14:50:04

Priority: 10

OK to run? (yes/mod/no):

bconsole 6.10:

run job

At this point, take some time to look carefully at what is printed and understand it. It is asking you if it is OK to

run a job named BackupClient1Dir

Job with FileSet SelfTestDir

FileSet as an Incremental job on your Client, and to use

Storage FileDir

Storage and Pool FullDir

Pool, and finally, it wants to run it now (the current time should be displayed by

your console).

Here we have the choice to run (yes), to modify one or more of the above parameters (mod), or to not run

the job (no). Please enter yes, at which point you should immediately get the command prompt (an

asterisk).

If you wait a few seconds, then enter the command messages you will get back something like:

TODO: Replace bconsole output by current version of Bareos.

*messages

28-Apr-2003 14:30 bareos-sd: Wrote label to prelabeled Volume

"TestVolume001" on device /var/lib/bareos/storage

28-Apr-2003 14:30 rufus-dir: Bareos 1.30 (28Apr03): 28-Apr-2003 14:30

JobId: 1

Job: BackupClient1.2003-04-28_14.22.33

FileSet: Full Set

Backup Level: Full

Client: bareos-fd

Start time: 28-Apr-2003 14:22

End time: 28-Apr-2003 14:30

Files Written: 1,444

Bytes Written: 38,988,877

Rate: 81.2 KB/s

Software Compression: None

Volume names(s): TestVolume001

Volume Session Id: 1

Volume Session Time: 1051531381

Last Volume Bytes: 39,072,359

FD termination status: OK

SD termination status: OK

Termination: Backup OK

28-Apr-2003 14:30 rufus-dir: Begin pruning Jobs.

28-Apr-2003 14:30 rufus-dir: No Jobs found to prune.

28-Apr-2003 14:30 rufus-dir: Begin pruning Files.

28-Apr-2003 14:30 rufus-dir: No Files found to prune.

28-Apr-2003 14:30 rufus-dir: End auto prune.

bconsole 6.11:

run

If you don’t see the output immediately, you can keep entering messages until the job terminates.

Instead of typing messages multiple times, you can also ask bconsole to wait, until a specific job is

finished:

*wait jobid=1

bconsole 6.12:

wait

or just wait , which waits for all running jobs to finish.

Another useful command is autodisplay on. With autodisplay activated, messages will automatically be displayed

as soon as they are ready.

If you do an ls -l of your /var/lib/bareos/storage directory, you will see that you have the following

item:

-rw-r----- 1 bareos bareos 39072153 Apr 28 14:30 Full-001

bconsole 6.13:

volume

This is the file Volume that you just wrote and it contains all the data of the job just run. If you run additional jobs,

they will be appended to this Volume unless you specify otherwise.